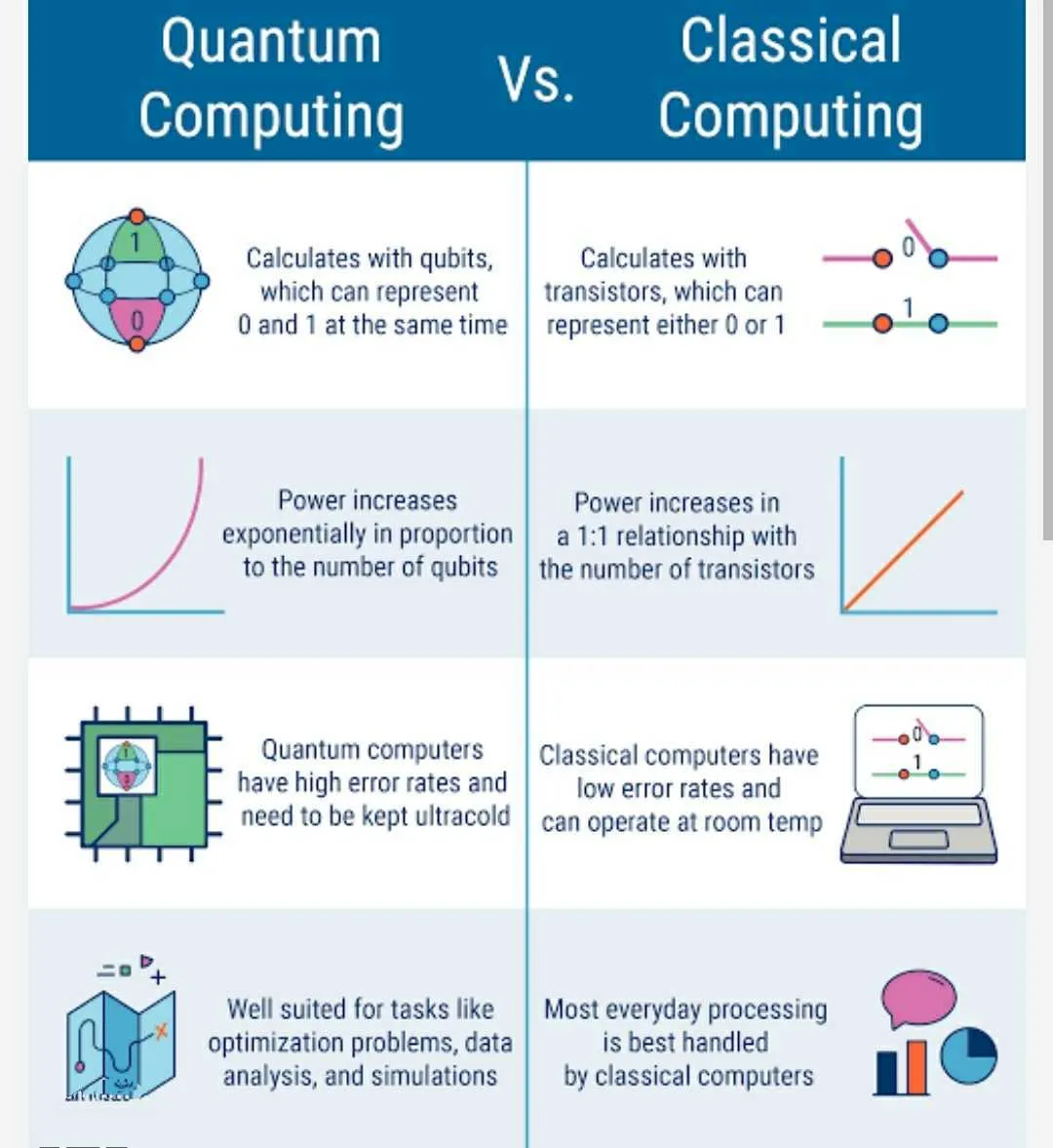

Quantum computing is an emerging field that promises to change how we process information and solve certain complex problems. Classical computers use bits that represent data as either 0 or 1. Quantum computers use quantum bits, or qubits, which can exist in a superposition of 0 and 1 thanks to the principles of quantum mechanics.

The qubit is the fundamental unit of quantum computing and the building block that sets quantum computers apart. A classical bit is always in one of two states, 0 or 1. A qubit can be in a superposition of both, described by two amplitudes whose squared magnitudes give the probabilities of measuring 0 or 1. A register of n qubits is described by 2^n amplitudes, but a measurement returns only one outcome, so quantum algorithms rely on interference to make the correct answers more likely. This is why quantum computers offer large speedups on specific problems rather than a blanket increase in processing power.
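To make superposition a little more concrete, here is a minimal sketch that simulates a single qubit on an ordinary computer. The helper names `hadamard` and `probabilities` are made up for this illustration and do not come from any quantum library. The state is stored as a pair of amplitudes, and the Hadamard gate (a standard one-qubit gate) turns the definite state 0 into an equal superposition.

```python
import math

def hadamard(state):
    """Apply the Hadamard gate to a one-qubit state (amp_of_0, amp_of_1)."""
    a, b = state
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))

def probabilities(state):
    """Chance of measuring 0 or 1: the squared magnitude of each amplitude."""
    a, b = state
    return (abs(a) ** 2, abs(b) ** 2)

zero = (1.0, 0.0)               # the qubit is definitely 0
plus = hadamard(zero)           # equal superposition of 0 and 1
p0, p1 = probabilities(plus)    # both are about 0.5
back = hadamard(plus)           # a second Hadamard interferes back to 0

print(p0, p1)
print(back)
```

Applying the gate twice returns the qubit to 0. That is interference at work, and it is something a coin that is simply "50% heads, 50% tails" could not do.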

One of the key algorithms that showcases the potential of quantum computing is Shor's algorithm. It can factor large integers efficiently, a task for which no efficient classical algorithm is known. The difficulty of factoring underpins widely used public-key encryption schemes such as RSA, so a quantum computer large enough to run Shor's algorithm on real key sizes would have significant implications for cybersecurity and data privacy.
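The number theory behind Shor's algorithm can be sketched classically. Factoring N reduces to finding the order r of a number a modulo N, that is, the smallest r with a^r ≡ 1 (mod N). The quantum computer is only needed for that order-finding step. In the sketch below, `find_order` is a brute-force stand-in for the quantum step (so it is slow for large N), and both function names are hypothetical.

```python
from math import gcd

def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1. Assumes gcd(a, n) == 1.
    Brute-force stand-in for the quantum period-finding step."""
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_from_order(a, n):
    """Try to split n using the order of a; return None if this a fails."""
    shared = gcd(a, n)
    if shared != 1:
        return (shared, n // shared)   # lucky guess: a already shares a factor
    r = find_order(a, n)
    if r % 2 == 1:
        return None                    # odd order: pick another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                    # trivial square root: pick another a
    p = gcd(half - 1, n)
    return (p, n // p)

print(factor_from_order(7, 15))        # (3, 5)
```

For N = 15 and a = 7 the order is 4, so the code computes 7² mod 15 = 4 and then gcd(4 − 1, 15) = 3, which gives the factors 3 and 5.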

Another prominent algorithm is Grover's algorithm, which speeds up searching an unsorted database of N items from roughly N lookups classically to roughly √N, a quadratic speedup. This could be used to accelerate brute-force search and some optimization problems.
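Grover's algorithm is small enough to simulate directly for a handful of items. The sketch below is a classical simulation, not real quantum code, and the function name `grover_search` is made up for this illustration. It tracks one amplitude per item, flips the sign of the marked item (the "oracle"), and then reflects every amplitude about the mean (the "diffusion" step). After about (π/4)·√N rounds, nearly all of the probability sits on the marked item.

```python
import math

def grover_search(n_items, marked):
    """Simulate Grover's algorithm; return (rounds, measurement probabilities)."""
    amp = [1 / math.sqrt(n_items)] * n_items         # uniform superposition
    rounds = int(math.pi / 4 * math.sqrt(n_items))   # about sqrt(N) rounds
    for _ in range(rounds):
        amp[marked] = -amp[marked]                   # oracle: flip marked sign
        mean = sum(amp) / n_items
        amp = [2 * mean - a for a in amp]            # diffusion: reflect about mean
    return rounds, [a * a for a in amp]

rounds, probs = grover_search(8, marked=5)
print(rounds)      # 2
print(probs[5])    # about 0.945
```

With 8 items, two rounds are enough to find the marked item about 94.5% of the time, whereas a classical search would need 4 lookups on average.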

However, building and operating quantum computers is an intricate task due to the inherent challenges of quantum mechanics. Quantum systems are highly sensitive to noise and decoherence, which cause errors in calculations. Researchers are working to mitigate these issues through error correction techniques and improving qubit stability.
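The basic idea of error correction, trading extra qubits for reliability, can be illustrated with a classical analogue of the three-qubit bit-flip code. This is only a rough sketch under simplifying assumptions: each of the three copies flips independently with probability p, and decoding is a majority vote. Real quantum error correction must also handle phase errors and cannot simply copy a quantum state, so this is not a full quantum code. The function name `logical_error_rate` is hypothetical.

```python
from itertools import product

def logical_error_rate(p, n=3):
    """Probability that a majority vote over n copies decodes wrongly,
    when each copy flips independently with probability p."""
    total = 0.0
    for flips in product([0, 1], repeat=n):    # every possible error pattern
        k = sum(flips)                         # number of flipped copies
        if k > n // 2:                         # majority flipped: decoding fails
            total += p ** k * (1 - p) ** (n - k)
    return total

print(logical_error_rate(0.1))    # about 0.028, down from 0.1 unprotected
```

The encoding only helps when the physical error rate is already low (below 50% in this toy model), which hints at why improving qubit stability and error correction go hand in hand.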

Various physical implementations of qubits have been explored, such as superconducting circuits, trapped ions, topological qubits, and more. Each approach has its strengths and challenges, and the race to find the most scalable and error-resistant qubits is ongoing.

Several tech giants and startups have invested heavily in quantum computing research, leading to significant advancements. Companies such as IBM, Google, and Microsoft have built quantum hardware or cloud platforms that give researchers and developers remote access to quantum computing resources.

Quantum computing also has potential applications in various industries. In drug discovery, quantum computers could simulate molecular interactions more accurately, which may lead to faster drug development. Some optimization problems in logistics, finance, and supply chain management might be solved more efficiently, although the size of any speedup is still an open question. Machine learning and artificial intelligence algorithms could also benefit, and this remains an active area of research.

Despite these exciting possibilities, quantum computing is still in its early stages, and practical, large-scale quantum computers are not yet a reality. Researchers are still tackling hardware challenges, developing quantum error correction methods, and refining algorithms to harness the full potential of quantum computing.

Moreover, quantum computing's computational advantages are not universal; it excels in specific tasks while offering no improvement in others. Determining which problems can benefit from quantum computing and which cannot is an ongoing area of research.

As quantum computing progresses, it also raises concerns about its impact on classical cryptography. With the potential to break existing encryption algorithms, it will be essential to develop quantum-resistant cryptographic systems to secure data in the post-quantum era.

This report was published via Actifit app (Android | iOS). Check out the original version here on actifit.io