Testing Open WebUI

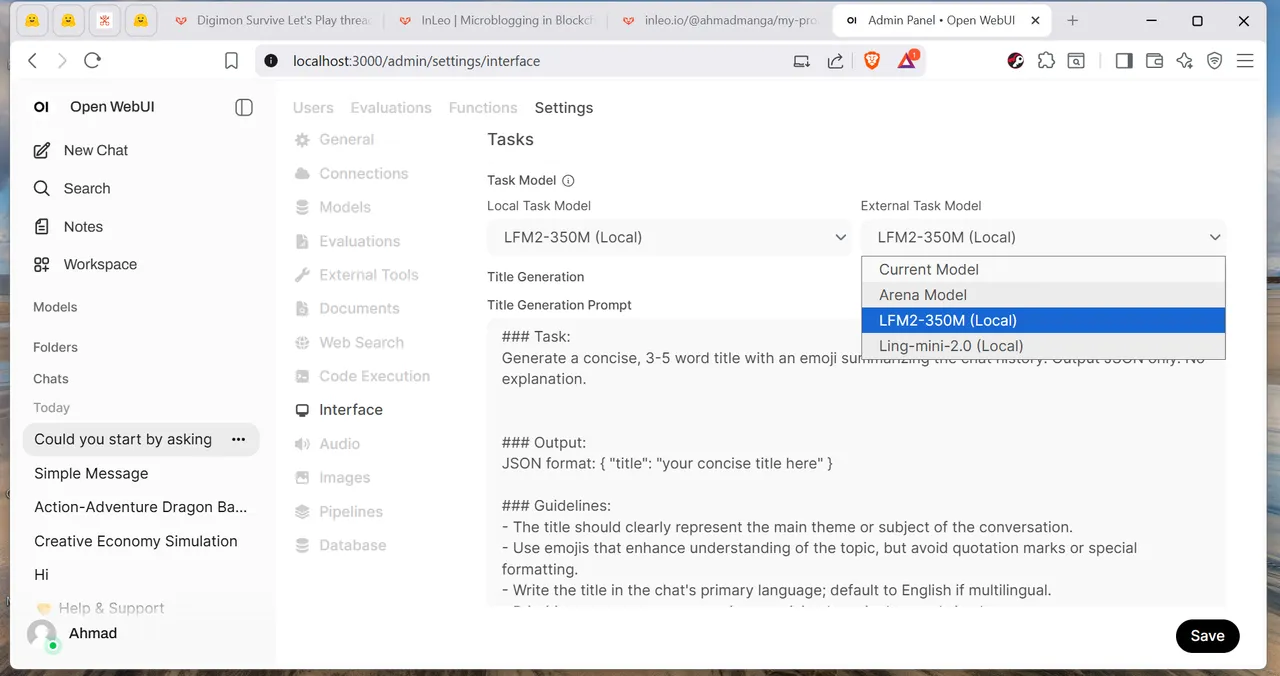

So, after a few days of testing LLMs for local use, I settled on Open WebUI with two KoboldCpp instances running. The main instance runs my main MoE (Mixture of Experts) model (either Ling-Mini2 or Qwen3-30B-A3B), and the second instance runs a small "Task" model for title generation, tagging, and follow-up questions.

I needed a model smaller than 0.5B for this last task...
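For anyone curious what the two-instance split looks like in practice, here is a rough Python sketch of the routing idea. It assumes both instances expose an OpenAI-compatible chat endpoint; the ports, the `TASK_KINDS` names, and the helper functions are placeholders I made up for illustration, not my actual config or anything Open WebUI does internally.

```python
import json
import urllib.request

# Assumed local endpoints: the ports are placeholders, adjust to your own setup.
MAIN_URL = "http://localhost:5001/v1/chat/completions"  # big MoE model
TASK_URL = "http://localhost:5002/v1/chat/completions"  # small task model

# Hypothetical labels for the lightweight housekeeping jobs.
TASK_KINDS = {"title", "tags", "follow_up"}


def pick_endpoint(kind: str) -> str:
    """Route housekeeping jobs to the task model, everything else to the main model."""
    return TASK_URL if kind in TASK_KINDS else MAIN_URL


def build_request(kind: str, prompt: str, max_tokens: int = 64) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the right instance."""
    payload = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        pick_endpoint(kind),
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(req: urllib.request.Request) -> str:
    """Send the request and return the reply text (needs the instance to be running)."""
    with urllib.request.urlopen(req) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Something like `send(build_request("title", "Give this chat a short title: ..."))` would then hit the small model, while a normal chat message goes to the main one. The point of the split is that the tiny model handles the frequent, cheap jobs without tying up the big one.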

From my testing, SmolLM and FunctionGemma did way worse than LFM2-350M, so I'm going to stick with LFM2-350M for the foreseeable future. #ai #openwebui #technology #localai

RE: LeoThread 2026-01-01 15-57